Web scraping tools are software built specifically to gather data from the web. They are also known as web harvesters or web data extraction tools, and they are useful to anyone trying to collect information from the World Wide Web. Web scraping is, in effect, a modern form of data entry, because it removes the need to copy and paste information by hand.

These programs seek out new information, either when triggered manually or automatically on a schedule, and then collect and organize any relevant updates. For instance, you can use a scraping tool to collect product names and prices from Amazon.

In this post, we’ll go over 5 of the best web scraping tools you can use to gather data with no coding required.

When to Use Web Scraping Tools

It’s impossible to list every possible application of web scraping tools, so we’ll stick to a few of the most common ones.

1. Collect data for market research

Web scraping tools are a powerful aid to market research. They can help you anticipate where your company or industry is heading over the next six months.

The tools can gather data from numerous sources, including analytics vendors and market research firms, and organize it in a single place for easy access and analysis.

2. Extract contact information

These tools can gather contact information from the Internet, such as email addresses and phone numbers. You can use it to build a comprehensive directory of suppliers, manufacturers, and other parties of interest to your company.
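To give a rough idea of what such a tool does under the hood, the sketch below pulls email addresses and phone numbers out of page text with regular expressions. It is a minimal sketch and does not describe how any particular product works. The patterns are simplified assumptions (the phone pattern only covers North American formats), and `extract_contacts` is a hypothetical helper name.

```python
import re

# Simplified patterns for illustration; real-world addresses and numbers vary far more.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# North American style only, e.g. (555) 123-4567 or +1 555-123-4567.
PHONE_RE = re.compile(r"(?:\+1[\s.-]?)?\(?\d{3}\)?[\s.-]?\d{3}[\s.-]?\d{4}")


def extract_contacts(text: str) -> dict[str, list[str]]:
    """Return unique emails and phone numbers in order of first appearance."""
    # dict.fromkeys removes duplicates while preserving order.
    emails = list(dict.fromkeys(EMAIL_RE.findall(text)))
    phones = list(dict.fromkeys(PHONE_RE.findall(text)))
    return {"emails": emails, "phones": phones}
```

In practice you would run this over the text of each scraped page and merge the results into your directory.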

3. Download solutions from Stack Overflow

A web scraping tool can collect solutions from Stack Overflow and other Q&A websites and save them for offline reading or long-term storage.

Because the saved answers remain available without an active Internet connection, you depend less on having one.

4. Look for jobs or candidates

Web scraping tools are a useful resource both for employers looking to fill open positions and for people looking for new job opportunities.

They can also quickly retrieve listings that match a set of predefined criteria, which eliminates laborious manual searches.
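After the listings have been scraped, filtering them against predefined criteria can be as simple as the sketch below. The `JobPosting` fields and the `filter_jobs` helper are hypothetical. A real scraper would fill them in from whatever each job board exposes.

```python
from dataclasses import dataclass


@dataclass
class JobPosting:
    # Hypothetical fields; actual job boards expose different data.
    title: str
    location: str
    salary: int  # annual salary, in the source's currency
    remote: bool


def filter_jobs(jobs, keywords, min_salary=0, remote_only=False):
    """Keep postings whose title contains any keyword (case-insensitive),
    that pay at least min_salary, and that are remote if remote_only is set.
    An empty keyword list matches every title."""
    wanted = [k.lower() for k in keywords]

    def matches(job):
        title = job.title.lower()
        return (
            (not wanted or any(k in title for k in wanted))
            and job.salary >= min_salary
            and (job.remote or not remote_only)
        )

    return [job for job in jobs if matches(job)]
```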

5. Track prices from multiple markets

If you shop online and like to keep tabs on the prices of items you’re interested in across various marketplaces and retailers, a web scraping tool is a must.
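Price tracking comes down to extracting a price from each retailer's page and comparing the results. This minimal sketch uses Python's built-in `html.parser`. It makes two hypothetical assumptions: each page marks its price with an element whose `class` includes `price` (real sites won't reliably do this), and prices use a dot as the decimal separator.

```python
import re
from decimal import Decimal
from html.parser import HTMLParser


class PriceParser(HTMLParser):
    """Collect numbers from text directly inside elements with class 'price'."""

    def __init__(self) -> None:
        super().__init__()
        self._in_price = False
        self.prices: list[Decimal] = []

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if "price" in classes:
            self._in_price = True

    def handle_endtag(self, tag):
        self._in_price = False

    def handle_data(self, data):
        if self._in_price:
            # Strip currency symbols and thousands separators, e.g. "$1,299.00" -> "1299.00".
            digits = re.sub(r"[^\d.]", "", data)
            if digits:
                self.prices.append(Decimal(digits))


def lowest_price(pages: dict[str, str]) -> tuple[str, Decimal]:
    """Given {store_name: html}, return the store with the lowest price found."""
    best = None
    for store, html in pages.items():
        parser = PriceParser()
        parser.feed(html)
        parser.close()
        for price in parser.prices:
            if best is None or price < best[1]:
                best = (store, price)
    if best is None:
        raise ValueError("no prices found")
    return best
```

`Decimal` is used instead of `float` so prices compare exactly.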

Examples of Great Web Scraping Tools

Let’s look at the top web scraping software options. Some are completely free, while others offer free trials and paid upgrades. Do your research before committing to any one of them.

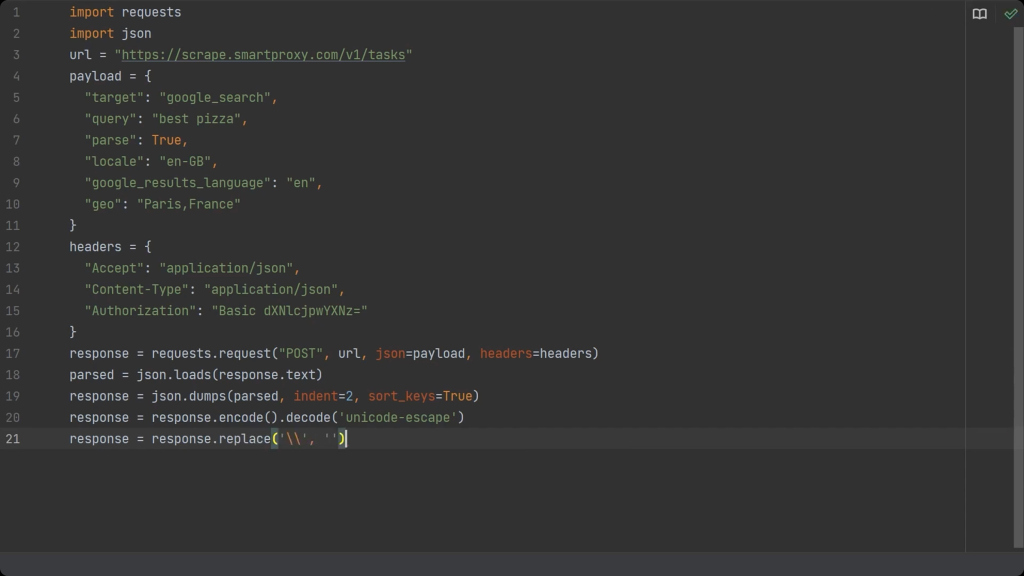

1. Smartproxy SERP Scraping API

Without the right infrastructure, scraping Google search results pages can be a major hassle. The Smartproxy SERP Scraping API is an excellent tool for this purpose. This Search Engine Results Page API combines a sizable proxy network, a web scraper, and a data parser.

To obtain structured data from the most popular search engines, you only need to send a single API request, and the service promises a successful response every time.

You can narrow your search to a specific country, state, or city and receive either unprocessed HTML or parsed JSON results. Smartproxy’s search engine proxies can be used for a wide range of tasks, including real-time monitoring of keyword rankings and other SEO metrics, retrieval of paid and organic data, and price tracking.

Access costs $100 per month plus tax.

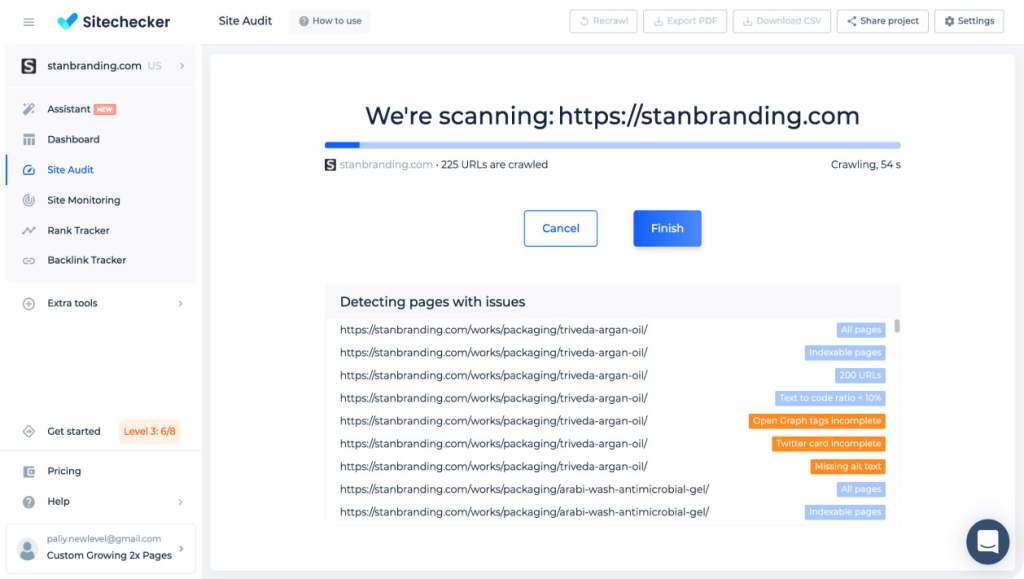

2. Sitechecker

Sitechecker is a cloud-based web crawler that quickly analyzes your website’s technical SEO. On average, the tool can crawl up to 300 pages in 2 minutes. It checks every internal and external link and displays the results in a detailed report on the control panel.
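Sitechecker does not publish its crawler internals. The following is only a generic sketch of one step such a crawler performs: collecting the links on a page and sorting them into internal and external ones. `classify_links` is a hypothetical name. Actually checking whether each link works would also require one HTTP request per URL, and this sketch treats `www.example.com` and `example.com` as different hosts.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Gather absolute URLs from every <a href="..."> on a page."""

    def __init__(self, base_url: str) -> None:
        super().__init__()
        self.base_url = base_url
        self.links: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                # Resolve relative links like "/about" against the page URL.
                self.links.append(urljoin(self.base_url, href))


def classify_links(base_url: str, html: str) -> tuple[list[str], list[str]]:
    collector = LinkCollector(base_url)
    collector.feed(html)
    collector.close()
    host = urlparse(base_url).netloc
    internal, external = [], []
    for link in collector.links:
        parsed = urlparse(link)
        if parsed.scheme not in ("http", "https"):
            continue  # skip mailto:, javascript:, tel:, etc.
        (internal if parsed.netloc == host else external).append(link)
    return internal, external
```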

After you customize the crawler rules and filters to your specifications, you get a reliable website score that reflects the overall condition of your site.

It also emails you whenever it detects a problem on your site, and you can collaborate with your team and contractors by sharing a link to the project.

3. Oxylabs

The Scraper APIs provided by Oxylabs can extract information from even the most complex websites, and they excel at large-scale scraping operations. Four Scraper APIs are available: SERP Scraper API, E-Commerce Scraper API, Real Estate Scraper API, and Web Scraper API.

Each Scraper API is tailored to a specific set of targets for optimal performance. Prices start at $99 per month. Every Scraper API includes the following:

- Pay only for successful results.

- Quick and simple access to language-specific content.

- Easy scaling to meet new demands.

- A proxy pool of more than 102 million IPs, which Oxylabs describes as the largest available.

- Delivery of results to cloud storage (AWS S3 or Google Cloud Storage).

- Hassle-free bypassing of geographic restrictions, with hardly any CAPTCHAs or IP blocks.

- Around-the-clock support via chat and email.

You can try it risk-free for 7 days, and your credit card won’t be charged.

Pricing:

- $5 for 5,000 pages, 5 results per page

- $99/month for 29,000 pages, 15 search results

- $399/month (business plan) for 160K pages, 50 results per page

- $999/month (pricing for businesses) for 526K pages, 100 results/s

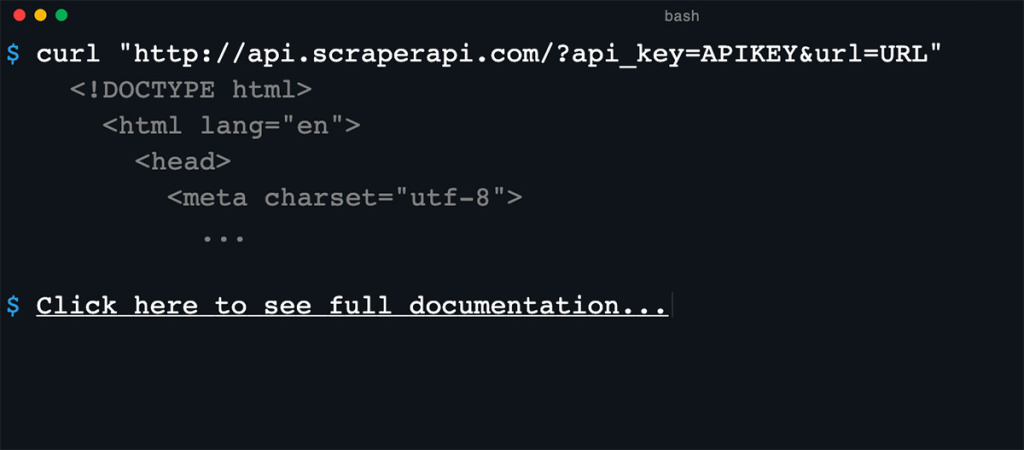

4. Scraper API

Scraper API aims to make web scraping easier by handling proxy, browser, and CAPTCHA configuration for you.

It supports popular languages such as Bash, Node.js, Python, Ruby, Java, and PHP. Its key features include:

- Customizable requests (request type, request headers, headless browser, IP geolocation).

- Rotating IP addresses.

- A pool of more than 40 million IPs.

- JavaScript rendering.

- Unlimited bandwidth, with speeds of up to 100 Mbit/s.

- More than 12 locations, and simple to implement.

Four different subscription tiers are available for the Scraper API: Hobby ($29/month), Startup ($99/month), Business ($249/month), and Enterprise ($499/month).

5. Scrapingdog

According to the company, Scrapingdog’s proxy API is among the fastest available for extracting data from the web. The tool draws on a pool of more than 40 million IPs and routes each scraping request through a different IP to reduce the chance of being blocked.
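Per-request IP rotation can be sketched as a simple round-robin over a proxy pool. The proxy addresses below are placeholders from a documentation-only IP range, and `RotatingFetcher` is a hypothetical class. With a hosted service like Scrapingdog, the rotation happens on the provider's side, so you would not manage the pool yourself.

```python
import itertools
import urllib.request

# Placeholder proxies (203.0.113.0/24 is reserved for documentation).
PROXIES = [
    "http://203.0.113.10:8080",
    "http://203.0.113.11:8080",
    "http://203.0.113.12:8080",
]


class RotatingFetcher:
    """Send each request through the next proxy in the pool, round-robin."""

    def __init__(self, proxies: list[str]) -> None:
        self._pool = itertools.cycle(proxies)

    def next_opener(self) -> tuple[str, urllib.request.OpenerDirector]:
        proxy = next(self._pool)
        handler = urllib.request.ProxyHandler({"http": proxy, "https": proxy})
        return proxy, urllib.request.build_opener(handler)

    def fetch(self, url: str, timeout: float = 10.0) -> bytes:
        # Each call uses a different proxy, so consecutive requests come from different IPs.
        _, opener = self.next_opener()
        with opener.open(url, timeout=timeout) as resp:
            return resp.read()
```

Round-robin is the simplest rotation policy. Production systems typically also drop proxies that start failing or getting blocked.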

The tool also uses headless Chrome, so you can extract data from JavaScript-rendered websites. To collect information from a specific website, you can write a script tailored to that site.

Key features:

- Highly scalable web data extraction.

- Rotating proxies and headless Chrome make data collection effortless.

- Additional APIs for Google and LinkedIn.

- Easy to implement, with no coding required.

- A screenshot API that can capture full-page or partial screenshots.

Pricing: the first 1,000 API calls are free. After that, plans cost $30/month (Lite), $90/month (Standard), $200/month (Pro), and $500+/month (Enterprise).

Bonus: A few more…

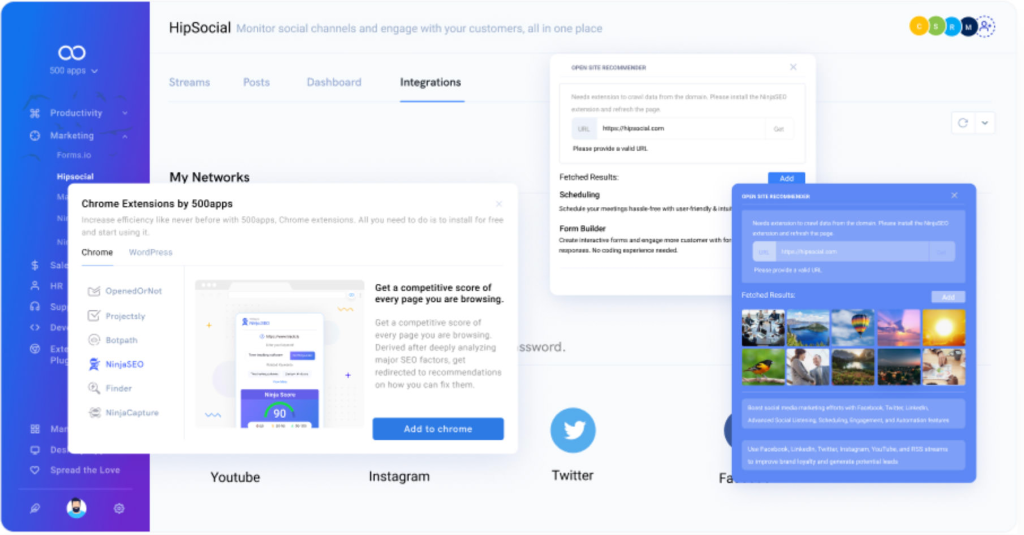

HipSocial

With HipSocial, you can quickly scrape the web for engaging content to share on social media. The tool lets you scrape information from specific websites and share it on the most popular social networks with a single click.

The tool’s Chrome extension bot, NinjaSEO Bot, lets you scrape massive amounts of data without any programming. Besides text, you can also scrape images related to your brand or your client’s brand.

Alongside its social media analytics tool, HipSocial offers a social listening feature for gauging the success of your social media campaigns.

Cloud plans start at $14.99/month, while Enterprise plans cost $74.95/month with HipSocial’s “one price for 50 apps pricing package.”

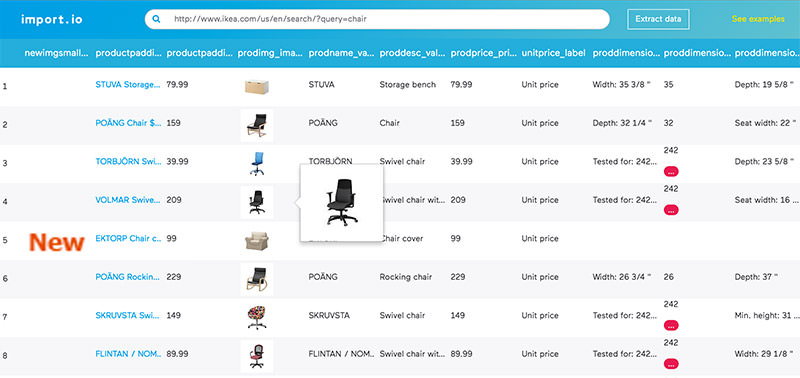

Import.io

Import.io’s builder lets you import data from a specific web page and export it to CSV. Without writing a single line of code, you can scrape thousands of web pages and build 1,000 or more APIs tailored to your needs.

Import.io retrieves millions of data points every day, and companies can access them at low prices. In addition to the web-based interface, free apps are available for Windows, macOS, and Linux. You can use them to create data extractors and crawlers, download data, and sync with your online account.

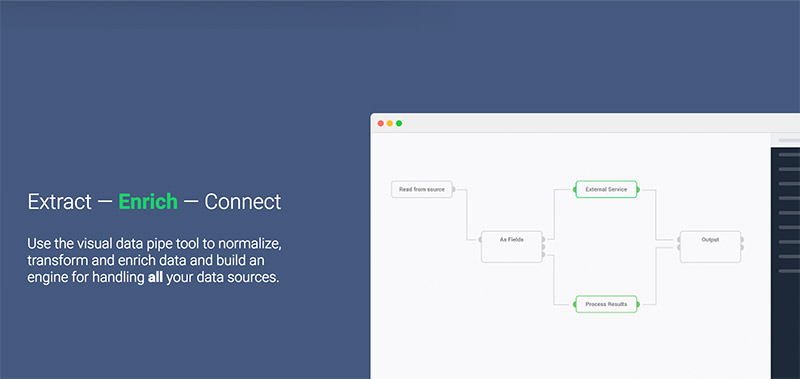

Dexi.io (formerly known as CloudScrape)

Like Webhose, CloudScrape doesn’t require you to download anything to collect data from any website. You can configure crawlers and extract data in real time using its built-in browser-based editor. Collected data can be saved to cloud storage services such as Google Drive and Box.net, or exported as CSV or JSON.
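Exporting scraped records to CSV or JSON requires only Python's standard library. This minimal sketch assumes the records are flat dictionaries that all share the same keys. The helper names are hypothetical.

```python
import csv
import io
import json


def to_csv(records: list[dict]) -> str:
    """Serialize records to CSV text, using the first record's keys as the header."""
    if not records:
        return ""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=list(records[0].keys()))
    writer.writeheader()
    writer.writerows(records)  # fields containing commas are quoted automatically
    return buf.getvalue()


def to_json(records: list[dict]) -> str:
    """Serialize records to pretty-printed JSON, keeping non-ASCII text readable."""
    return json.dumps(records, indent=2, ensure_ascii=False)
```

To write a file instead of a string, open it with `open(path, "w", newline="")` and pass that file to `csv.DictWriter`. The `newline=""` argument is what the `csv` module documentation recommends.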

CloudScrape also lets you access data anonymously through a network of proxy servers. Data you store with CloudScrape is archived after two weeks. The web scraper offers 20 free scraping hours, and paid use costs $29 per month.

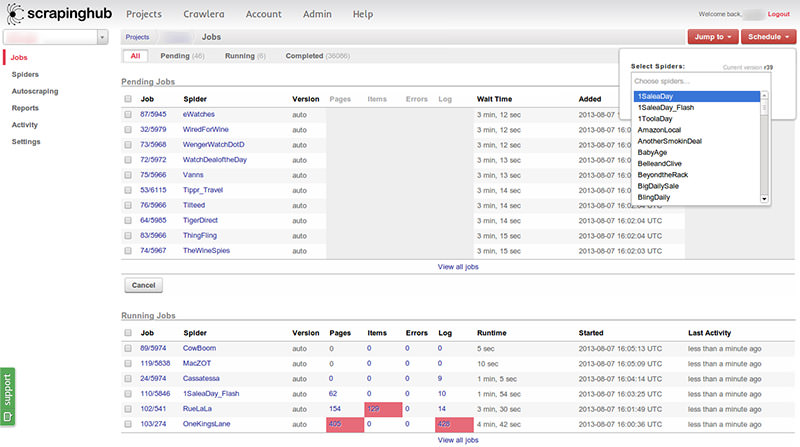

Zyte

Thousands of developers rely on Zyte (previously Scrapinghub), a cloud-based data extraction tool. To crawl large or bot-protected sites, Zyte uses Crawlera, a smart proxy rotator that helps bypass bot countermeasures.

Zyte converts entire web pages into structured content. If its crawl builder can’t meet your needs, you can contact its support staff for help. A free plan allows one concurrent crawl, and a $25/month premium plan allows up to four concurrent crawls.

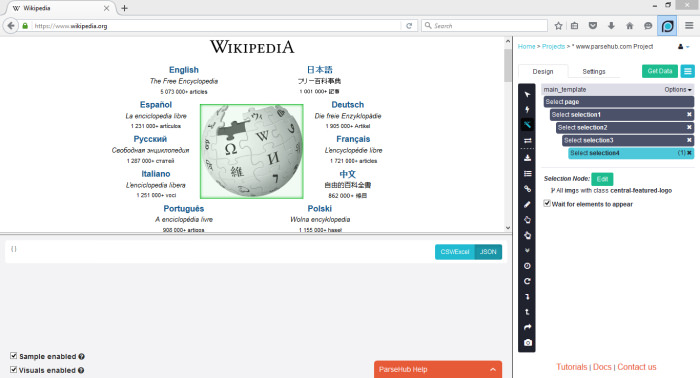

ParseHub

ParseHub supports JavaScript, AJAX, sessions, cookies, and redirects when crawling one or more websites. The app uses machine learning to identify even the most complex documents on the web and generate output files in the right format.

In addition to its web app, ParseHub also has a free desktop application for Windows, macOS, and Linux that allows you to run up to five crawl projects on its most basic plan. There is a premium plan available for $89 per month that includes support for 20 projects and 10,000 web pages per crawl.

ScrapingBot

If you’re a web developer who needs to scrape data from a URL, ScrapingBot is a great web scraping API to use. It is especially helpful on product pages, where it collects all the information you need (image, product title, price, description, stock, delivery costs, etc.). It’s useful for anyone who needs to gather and maintain accurate merchandising data or aggregate product information.

ScrapingBot also provides several niche APIs, including ones for real estate, Google search, and social media data (LinkedIn, TikTok, Instagram, Facebook, Twitter).

Features

- Headless Chrome

- Fast response times

- Concurrent requests

- Support for large bulk scraping jobs

Pricing

A free plan includes 100 credits every month. Paid packages cost €39, €99, €299, and €699 per month.

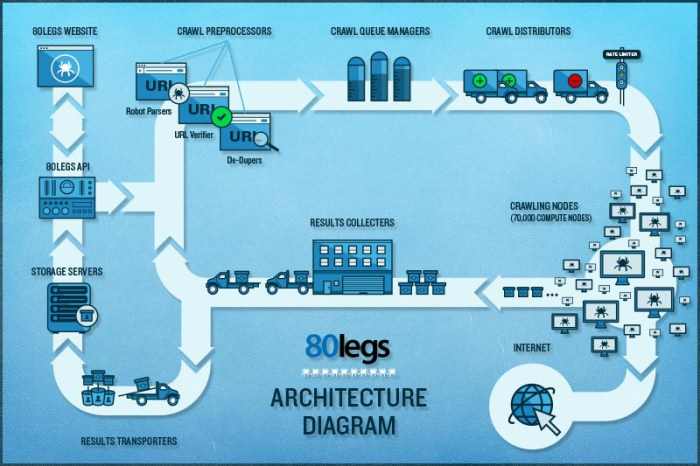

80legs

80legs is a powerful and customizable web crawling tool. It can retrieve large datasets and lets you download the retrieved data immediately. The company says it crawls more than 600,000 domains and counts major companies such as MailChimp and PayPal among its users.

Its Datafiniti feature lets you search across all available data quickly. A free tier lets you crawl up to 10,000 URLs per month, and a paid introductory tier lets you crawl up to 100,000 URLs per month for $29.

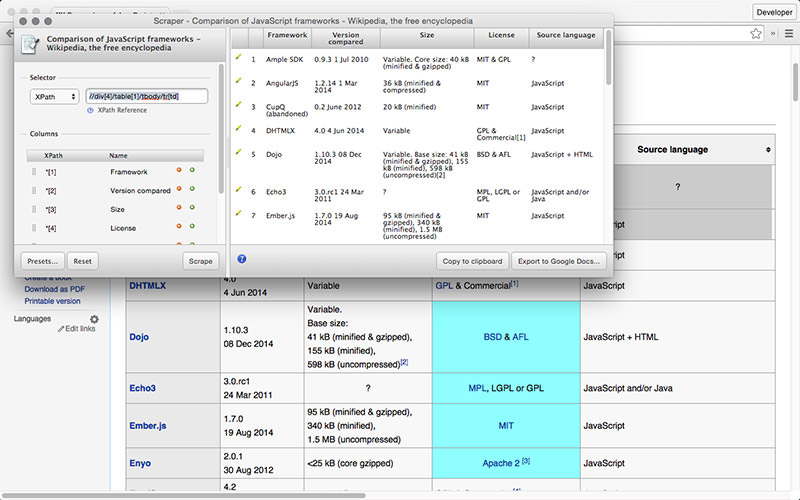

Scraper

Scraper is a Chrome extension with limited data extraction features, but it makes online research easy and exports data to Google Sheets. Users at every level, from novices to seasoned pros, will appreciate its ease of use. Data can be copied to the clipboard or saved to spreadsheets via OAuth.

Scraper is a free browser extension that automatically generates compact XPath expressions for defining the URLs to crawl. Because there is no complicated configuration to deal with, it suits newcomers. However, it lacks the automatic or bot crawling offered by tools like Import.io, Webhose, and others.
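To show what an XPath definition does, here is a small sketch using Python's built-in `xml.etree.ElementTree`. That module supports only a limited subset of XPath and requires well-formed markup, so real-world HTML usually calls for a more forgiving parser. The sample page and the `scrape_column` helper are made up for illustration.

```python
import xml.etree.ElementTree as ET

# A made-up, well-formed page standing in for a scraped document.
PAGE = """<html><body>
<table id="prices">
  <tr><td>Desk lamp</td><td>24.99</td></tr>
  <tr><td>Chair</td><td>89.00</td></tr>
</table>
</body></html>"""


def scrape_column(xhtml: str, xpath: str) -> list[str]:
    """Return the stripped text of every element matched by an XPath expression."""
    root = ET.fromstring(xhtml)
    return [(el.text or "").strip() for el in root.findall(xpath)]


# td[1] and td[2] select the first and second cell of each row (XPath is 1-based).
names = scrape_column(PAGE, ".//table[@id='prices']/tr/td[1]")
prices = scrape_column(PAGE, ".//table[@id='prices']/tr/td[2]")
```

A single expression selects the same field from every row. That is why one short XPath is enough to define what a tool like Scraper should extract.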

Which web scraping tool or extension do you find most useful? How would you like to mine the web for information? Share your experience in the comments below.